The Mainframe Paradox

The mainframe survived the web and the smartphone, and even grew because of them. What about AI?

Death To The Mainframe

“I predict that the last mainframe will be unplugged on March 15, 1996,” tech writer Stewart Alsop declared back in 1991. As reasonable as it might have seemed at the time – over a decade into the personal computer revolution, and with IBM approaching near-bankruptcy territory – that prediction turned out to be wrong. Alsop himself admitted so in 2002, with a picture of himself eating his own words.

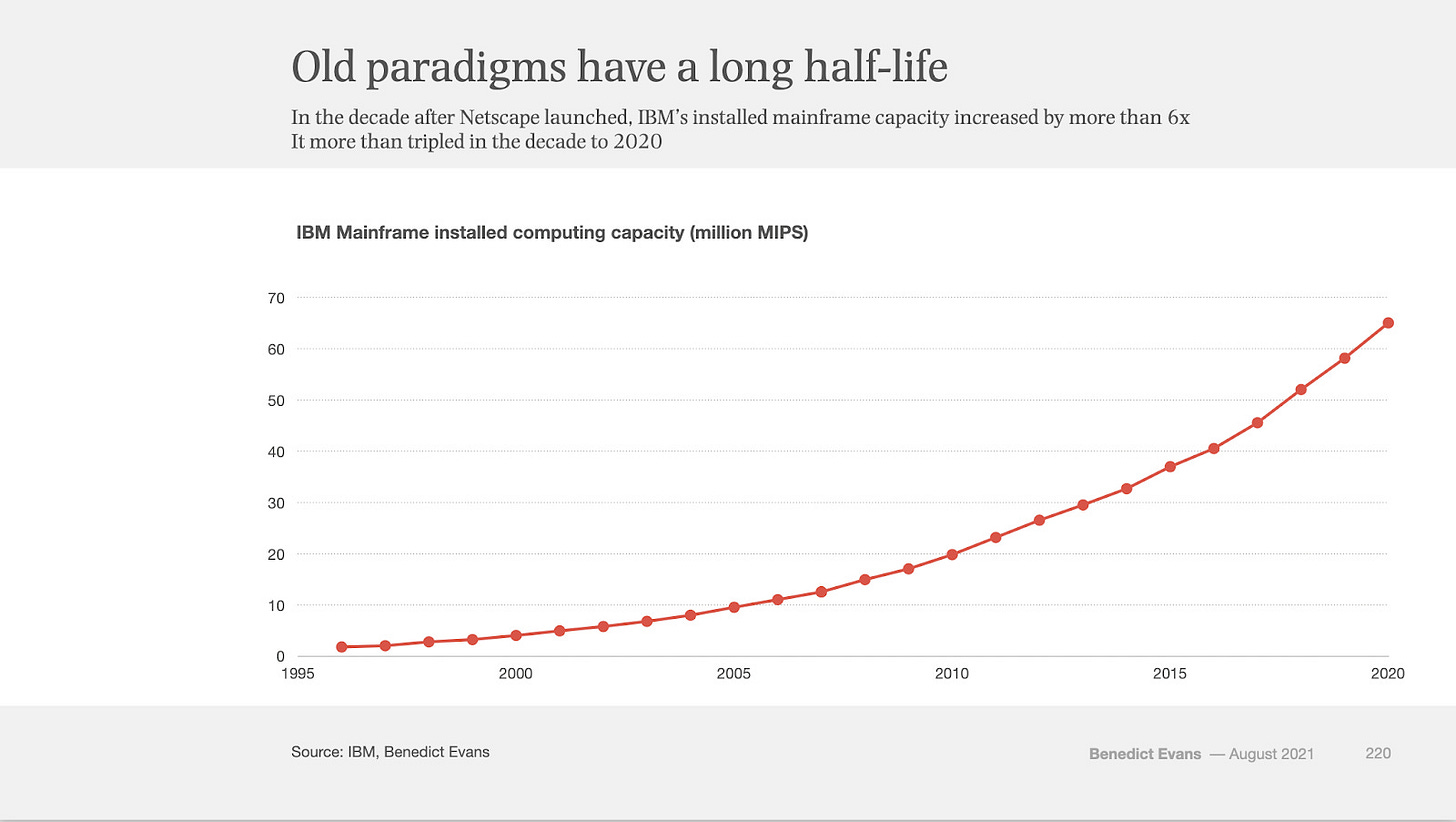

What’s interesting is that not only did the mainframe survive – thirty years past Alsop’s 1996 deadline – it grew substantially, as Benedict Evans explained in 2020:

The funny thing is, though, that mainframes didn’t go away [...] IBM’s mainframe installed base (measured in MIPS [Millions of Instructions Per Second]) has grown to be over ten times larger since 2000. Most people working in Silicon Valley today weren’t even born when mainframes were the centre of the tech industry, but they’re still there, inside the same big companies, doing the same big company things. [...] Mainframes carried on being a good business a long time after IBM stopped being ‘Big Blue’.

And here’s what surprised me most: the rise of new computing paradigms – first the PC, then the smartphone – is what drove the mainframe’s growth.

While the pipeline of new mainframe customers dried up as enterprises adopted the PC and the client/server paradigm during the 1980s, there was still a significant pool of existing customers – government agencies and large companies, built over the previous decades when IBM dominated the entire tech industry.

If you’d asked me back then, I would have assumed a trench warfare strategy: IBM jacking up prices in an attempt to extract as much money as possible from every customer before their inevitable migration to a more modern infrastructure. Milking the mainframe ice cube as it slowly melted. In line with Alsop’s 1991 prediction. The reality, however, turned out to be very different.

When the web arrived in the 1990s, traditional companies – banks, airlines, utilities, insurers – built websites and, during the 2010s, mobile apps. The customer-facing interfaces were new, but the underlying systems weren’t. Behind every “view balance” tap and every flight search, at the end of the call stack, was a mainframe. The mainframe remained the system of record (yes, that phrase); the new digital products triggered far more transactions against it, thus increasing the mainframe’s significance.

An IBM paper from 2014 walked through this dynamic. A customer who used to check their balance at an ATM once a week was now opening a banking app every day. That gets particularly tricky on payday: the bank has to update all customer balances at the same time, just as those customers log in simultaneously to see if they got paid.

And that’s just one example. The rise of e-commerce and mobile payments created an order of magnitude more electronic transactions to process; Visa alone increased its volume by 7x between 2008 and 2025. Flight booking systems originally designed to power a limited group of travel agents became highly stressed once services like Skyscanner or Google Flights allowed billions of smartphone users to constantly check for flight fares.

The web and smartphones drove a 10x increase in MIPS between 2000 and 2020. But that didn’t push organizations to migrate off their mainframes. Quite the opposite. It gave IBM something new to sell them: more performant machines to handle the increased load, as well as auxiliary products such as load balancers, read-only replicas, and storage solutions.

It’s an interesting paradox how the PC and Mobile eras made the mainframe obsolete, but at the same time, increased its importance and made it grow.

How to lose a monopoly

The same paradox repeated with later paradigm shifts. It was Microsoft and Intel who – due to IBM’s fatal mistake of outsourcing the operating system and processor of its PC to them – took over what was previously IBM’s dominant position. Over 90% of desktop computers were running Windows. Then came the World Wide Web. While Microsoft defeated Netscape in the web browser wars of the late 1990s, Google leveraged the web to disrupt the desktop app paradigm and dismantle the strategic importance of the Windows API. Guess what happened, however, as the Microsoft Windows moat was eroding – from How to lose a monopoly by Benedict Evans:

The web removed most of Microsoft’s levers of power, but it also sold a lot of PCs - for the first time there was a real reason for a ‘normal’ person to buy a computer. Hence, in 1995 there were perhaps 100m PCs on Earth, and three quarters of them were in offices, but today there are 1.5bn. If you wanted to get online, you needed a computer, and with Apple prostrate and Linux never quite managing to produce a consumer product, Windows was the only real option.

The web ended Windows’ dominance, while also driving a short-term explosion in Microsoft Windows sales; same paradox!

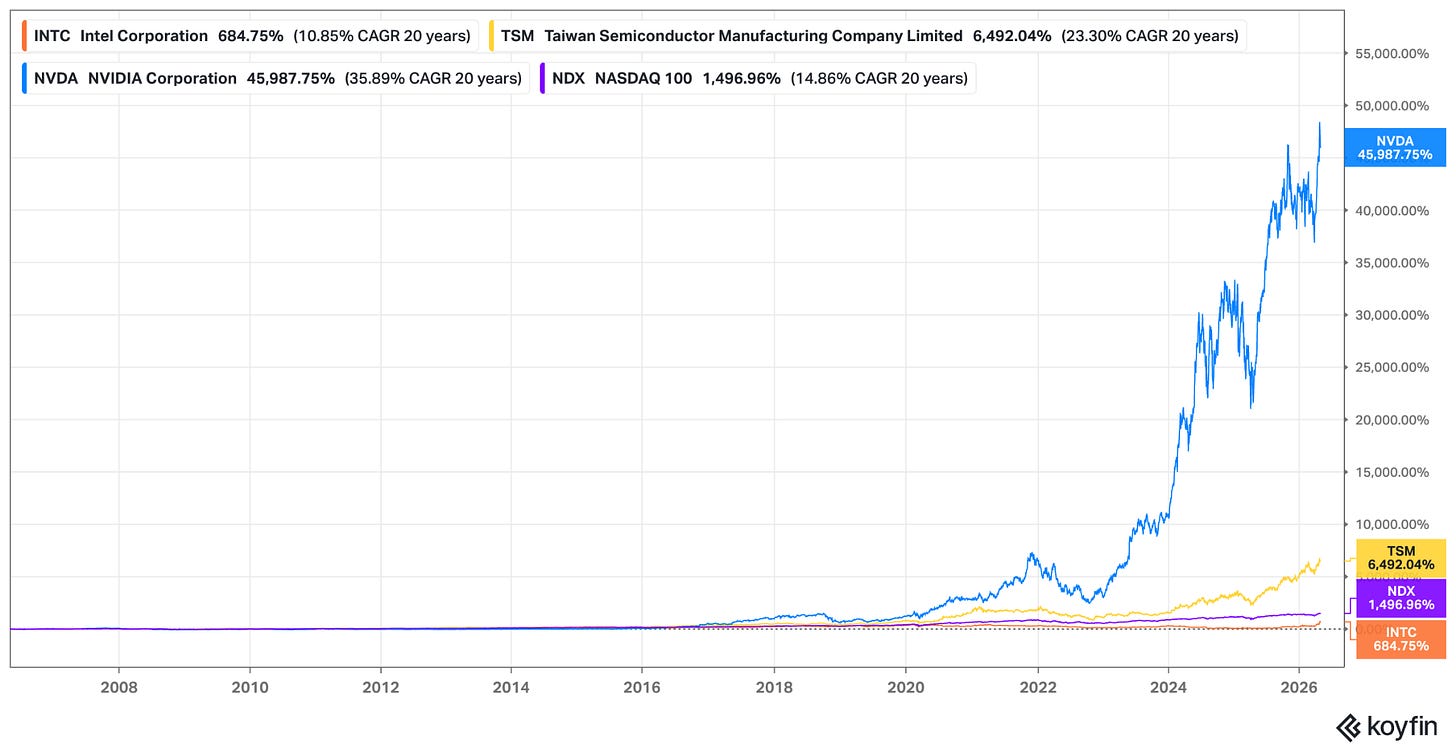

What about Intel? Unlike Microsoft, it wasn’t hurt by the web. On the contrary: its dominance in desktop computer chips translated into conquering the highest-margin segment of the CPU market: computer servers[1]. But Intel did miss the next paradigm shift: Mobile. It was TSMC who manufactured the smartphone processors, eventually allowing it to take over Intel’s position in leading-edge semiconductors during the 2010s. Intel’s financial performance, however, was fine even while it was losing its dominance, as Ben Thompson noted in Stratechery:

Missing out on that [Mobile] volume set the stage for Intel’s decline as a manufacturer, but Intel shareholders didn’t feel the pain because Intel was selling the chips that powered the data centers that [...] made mobile computing viable and compelling.

Mobile apps needed a cloud backend to work against, which meant Intel could sell far more server CPUs. And so while Mobile eventually cost Intel its lead, it also increased demand for cloud computing. Same paradox[2].

With the AI Scare recently causing stocks to crash in sector after sector, you might find comfort in these examples, where a new technology took away the moat of once-leading companies, while at the same time boosting their revenue for several years. None of these stocks, however, has been a smooth ride. Leading companies don’t typically accept their transition into a niche player with grace and humility; instead, they deny it, they fight it, they make huge acquisitions that don’t make sense, and insist on bad strategic bets. Andy Grove called it the inertia of success, and likened it to the psychology of individuals experiencing a serious loss and going through the five stages of grief.

That’s essentially what brought IBM to the edge of bankruptcy in 1993, and later caused its stock to experience a lost decade in the 2010s (while the NASDAQ and S&P 500 were ripping). That’s why Microsoft’s stock went nowhere in the first 15 years of the new millennium, as Steve Ballmer kept centering his strategy around Windows. Intel’s stock may have had its runs during the cloud heydays, and recently with AI, but it has still significantly lagged the NASDAQ index, not to mention the rallies of TSMC and Nvidia – the new industry leaders.

So this serves as a warning: while the mainframe paradox demonstrates how well-entrenched tech businesses may last – and even grow – despite losing their dominant position, they might get buried under a pile of bad capital allocation decisions.

AI and the Mainframe

All of this leads to the question of what AI does to the mainframe; IBM stock has recently been hit by the AI scare trade, as CNBC reported in February:

International Business Machines stock is getting slammed Monday, becoming the latest perceived victim of rapidly developing AI technology, after Anthropic said its Claude Code tool could be used to modernize legacy systems that run COBOL. Shares of IBM closed the day lower by nearly 13.2%.

While past initiatives by AWS, Google Cloud, and Azure to move mainframe workloads onto their platforms did not make a dent in IBM’s mainframe business, the fear was that AI – specifically, coding agents automating the migration of complex legacy systems – would be able to finally break through.

Or would it? “AI strengthens the mainframe case, it does not weaken it,” IBM’s senior vice president of software, Rob Thomas, argued on LinkedIn in response. He explained that translating COBOL code into another programming language isn’t enough to migrate these highly complex and interdependent systems onto a whole different infrastructure, similar to how vibe coding an iOS duplicate won’t displace a billion iPhones.

Moreover, AI provides the ability to refactor and modernize legacy code, which in turn allows customers to get more out of their mainframes. With the engineers who originally wrote the code long retired, and few new COBOL developers entering the job market, AI allows modern engineers to finally translate and refactor what might have previously been considered a black box that nobody touches.

The earnings IBM reported last week seem to support this case: during Q1 2026, IBM Z mainframe hardware revenue was up 51% YoY, following 67% growth last quarter. This is due to an AI-driven upgrade cycle, and while the growth in hardware revenue will eventually fade, it is expected to lead to more revenue in other parts of the business, as IBM CFO James Kavanaugh explained on the last earnings call:

Let me give you a stat. We just anniversaried our first full year of z17. That first full year is z17 versus the prior program z16 first full year, which by the way, was the best on record at that point in time. We’ve increased hardware placement value by over $1 billion. Now, you take that $1 billion and you think about the future monetization opportunity that we get. That’s that 3x to 4x multiplier that will play out over time. A big chunk of that being our TP software, but it’s also our storage attached. It’s our maintenance business, it’s our financing business. We monetize that value based on how many MIPS are shipped in the market. And for four quarters in a row on z17, we’ve shipped over 100% growth of new MIPS in the market, including the first quarter.

IBM CEO Arvind Krishna pointed to another way AI is benefiting the mainframe business: like every enterprise, mainframe customers want to incorporate AI into their workflows. What’s challenging, however, is that most of the LLM products are hosted in one of the major clouds, while many of the world’s largest banks, retailers, and airlines still have a lot of their data and workloads on mainframe computers. So IBM put a dedicated AI accelerator chip into the z17, allowing inference to run on the same hardware where the data is stored and transactions are being processed. Krishna also claims that having AI inference capabilities within the mainframe reduces security and availability risks, and named some examples:

ServiceNow is leveraging watsonx [IBM’s AI coding agents] for automated data quality and observability to deliver AI-ready data and code generation to refresh legacy applications to modern application runtimes, including ServiceNow. Visa continues to work with IBM on ongoing software and data modernization efforts, supporting the scale, resiliency, and performance of VisaNet. With Nestlé, we are using NVIDIA accelerated watsonx.data to embed AI directly into core order to cash operations, enabling faster real-time insights across Nestlé’s global supply chain.

More color was also provided around Thomas’s argument on AI coding agents, as Krishna noted that “clients who have deployed watsonx Code Assistant for Z are growing MIPS capacity three times faster than those who have not.” The same AI coding capability the market thought would migrate workloads off the mainframe is, according to IBM, increasing mainframe usage.

Looking back at the chart from Ben Evans above, it’s almost hard to believe that MIPS capacity grew 10x from 2000 through 2020, and it’s even wilder to imagine AI driving another 10x jump. But that’s what the evidence so far is pointing to.
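To put the 10x figure in perspective, here is a quick back-of-the-envelope sketch of the constant yearly growth rate it implies. This is plain compounding arithmetic, not a number reported by IBM or Evans; the function name and the 5- and 10-year horizons for a second 10x are hypothetical, chosen only for illustration.

```python
def implied_annual_growth(multiple: float, years: float) -> float:
    """Constant yearly growth rate that compounds to `multiple` over `years`."""
    return multiple ** (1.0 / years) - 1.0


# The 10x MIPS growth cited for 2000 through 2020.
historical = implied_annual_growth(10, 20)
print(f"10x over 20 years: {historical:.1%} per year")  # 12.2% per year

# Hypothetical horizons for another 10x jump (assumptions, not forecasts).
for years in (5, 10, 20):
    rate = implied_annual_growth(10, years)
    print(f"another 10x over {years} years would need {rate:.1%} per year")
```

In other words, the past two decades work out to roughly 12% compounded annually, and the shorter the window for a second 10x, the steeper the required yearly rate.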

To be clear, the mainframe isn’t IBM’s entire business; this post doesn’t discuss its hybrid cloud business, nor a couple of big acquisitions made recently. I hope to explore those in another post.

What is worth mentioning is that unlike during the early days of the PC, or the onset of the cloud, present-day IBM seems pretty humble and cognizant of its place in the tech industry food chain. It seems focused on serving its share of the market, and leveraging AI to grow within that segment. Which means that the AI paradigm shift might be smoother sailing for IBM. As long as the mainframe paradox keeps working.

Not financial advice. This post is for educational and general purposes only and should not be relied upon for investment decisions.

[1] Thanks to Google, who pioneered the model of distributed fault-tolerant computing running on commodity hardware (think: Intel x86 PC processors).

[2] Yes, there is a very interesting dynamic currently going on with Intel around AI. I’ll save that for a different post though.